The State of Internet Security in 2019

2019 started off in one of the worst ways possible: the Blank Media Games data breach. Since then, 2019 has been one of the most eventful years for data breaches, with GnosticPlayers making public several rounds of breaches from giants like eVite, Poshmark, and Canva. We decided to take a peek into the world of open databases, specifically Elasticsearch. Like Elasticsearch, many NoSQL database solutions default to no authentication and no security. This means anyone who knows which I.P. to connect to can connect, and we've seen the damage that results when they decide to do so.

Why?

We were initially inspired to publish this in light of recent events involving security researcher Vinny Troia, who came across multiple open Elasticsearch instances containing troves of data on everyday people. The most recent was the People Data Labs (PDL) exposure of 622 million records, including email addresses, phone numbers, social media profiles, and job history data. This is not the first time Vinny has stumbled on open servers. Other notable instances include Exactis, discovered on June 1st, 2018, exposing 340 million records, and Apollo, discovered on October 5th, 2018, exposing 200 million records.

We also wanted to see what can be done to better secure people's information and to figure out which organizations were the biggest offenders. NoSQL databases may ship with security and authentication disabled by default, but they still come with a slew of warnings and security recommendations. These include setting up a firewall that only allows whitelisted I.P.s and using the authentication plugins that have been built on top of the open-source software and released to the public for free.
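
The whitelisting recommendation boils down to one rule: only accept connections from known address ranges. Below is a minimal sketch of that check in Python using the standard-library `ipaddress` module. The networks in `ALLOWLIST` and the `is_allowed` helper are hypothetical placeholders. In practice this rule belongs in a firewall in front of the database, not in application code.

```python
import ipaddress

# Hypothetical allowlist: the only networks permitted to reach the database port.
ALLOWLIST = [
    ipaddress.ip_network("10.0.0.0/8"),       # e.g. internal application servers
    ipaddress.ip_network("203.0.113.17/32"),  # e.g. one admin workstation (documentation range)
]


def is_allowed(client_ip: str, allowlist=ALLOWLIST) -> bool:
    """Return True if client_ip falls inside any allowlisted network."""
    addr = ipaddress.ip_address(client_ip)
    # `in` returns False (rather than raising) when the address and network
    # are different IP versions, so IPv6 clients are simply rejected here.
    return any(addr in net for net in allowlist)
```

With this allowlist, `is_allowed("10.1.2.3")` returns `True`, while `is_allowed("198.51.100.7")` returns `False`.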

The Process

We've written a few scripts that we will not be releasing to the public, for obvious reasons, but here's a rundown of the process we followed:

- We scan the entire internet (zmap).

- We parse the results with a custom HTTP requests script (to confirm validity and weed out servers that have proper authentication in place).

- Last but not least, we run the resulting list of servers that require no authentication and have zero security through another custom script to produce some beautiful analytics.

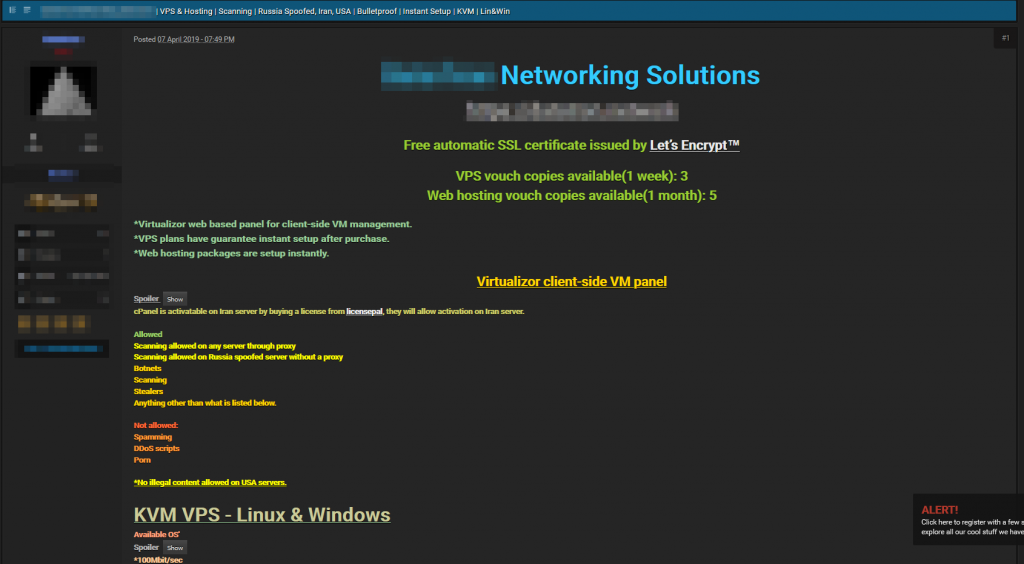

Scanning was the tricky part. While it is not illegal to scan the internet, it is frowned upon. Many hosting providers disallow scanning and will ban you if you set off specific triggers. As security researchers, we received clearance to scan from a server provided to us by an unnamed host.

Too Easy

We initially expected to be stopped pretty quickly, not by the host (we had clearance) but by bugs and hiccups. That was not the case. We were able to begin scanning almost immediately, and within 9 hours we had found every Elasticsearch instance open to the public on the default port. You may be asking yourself how easy this is to reproduce. Well, it's pretty darn easy: servers for this kind of activity are available all over the clearnet and darknet, and they cost mere dollars to rent.

Execution

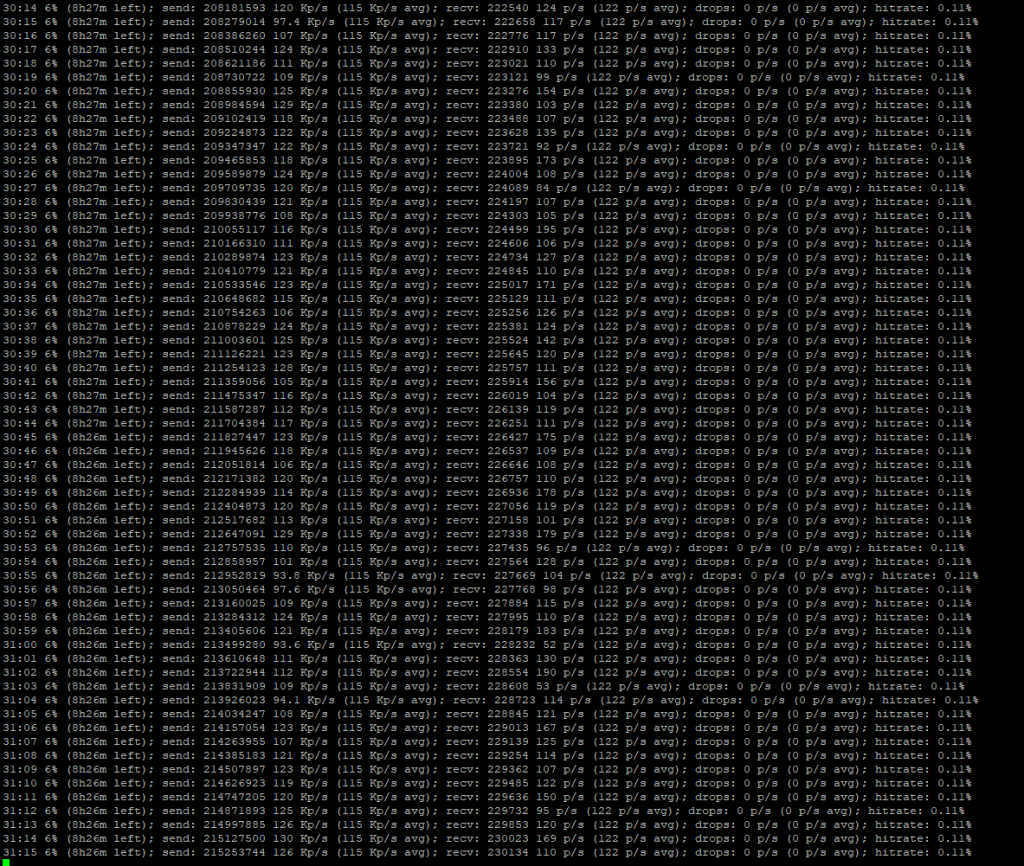

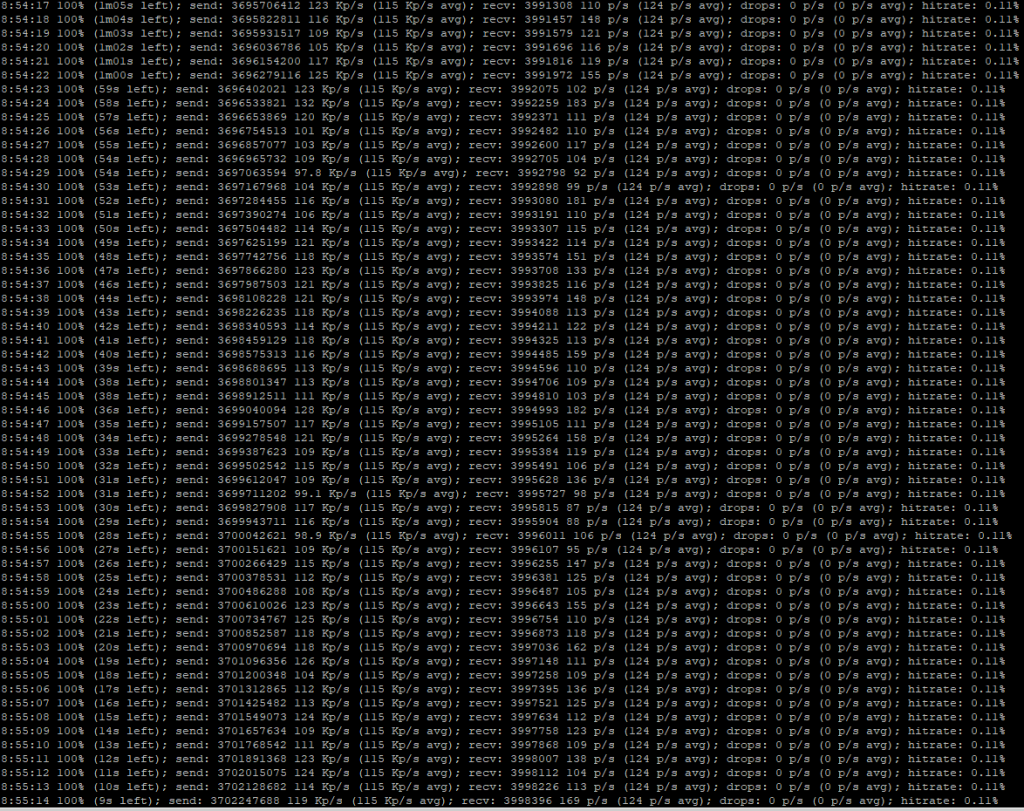

Once our scanning server was running, it took only 8 hours to scan the entire internet, revealing a total of ~4M servers with port 9200 (Elasticsearch's default HTTP port) open. This doesn't mean there are ~4M Elasticsearch instances. Other services may be listening on this port. Meanwhile, properly secured Elasticsearch clusters, whether running on a different port or not exposed to the public internet, never show up in the scan.

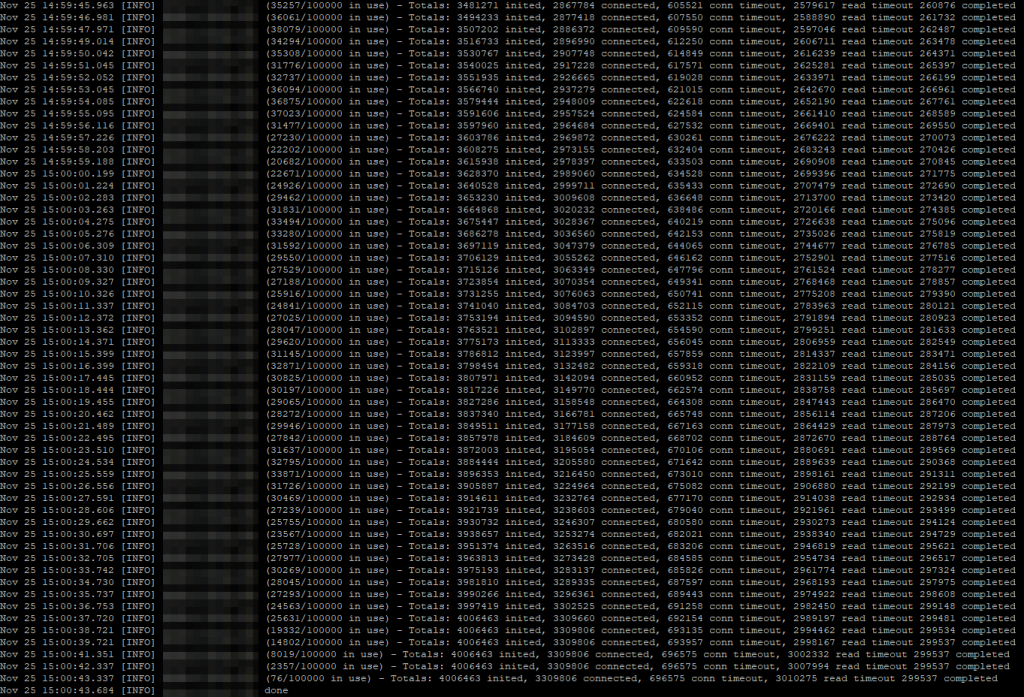

The next step was checking which of these servers were running Elasticsearch, and which of those required zero authentication. We used a simple script that queries each server over HTTP and inspects the response headers.
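
If you run Elasticsearch yourself, this distinction is worth checking on your own cluster. Below is a minimal sketch, assuming you have already fetched the response to an unauthenticated `GET /` on your node's HTTP port. With security enabled, Elasticsearch answers such a request with `401 Unauthorized`. An unprotected node instead returns its cluster info, which includes the tagline `"You Know, for Search"`. The function name and the labels it returns are hypothetical, and this is not the script we used.

```python
import json


def classify_root_response(status: int, body: str) -> str:
    """Classify the reply to an unauthenticated GET / against an Elasticsearch HTTP port.

    Hypothetical helper for auditing a node you operate; it does no networking itself.
    """
    if status == 401:
        # Security is enabled: the node refuses anonymous requests.
        return "auth_required"
    if status == 200:
        try:
            info = json.loads(body)
        except json.JSONDecodeError:
            return "other"
        # An unprotected node returns its cluster info, including this tagline.
        if isinstance(info, dict) and info.get("tagline") == "You Know, for Search":
            return "open_elasticsearch"
    # Anything else: a different service, a proxy, or an unexpected reply.
    return "other"
```

Suppose your own node comes back as `open_elasticsearch` when queried without credentials. Then anyone who finds its I.P. can read it too.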

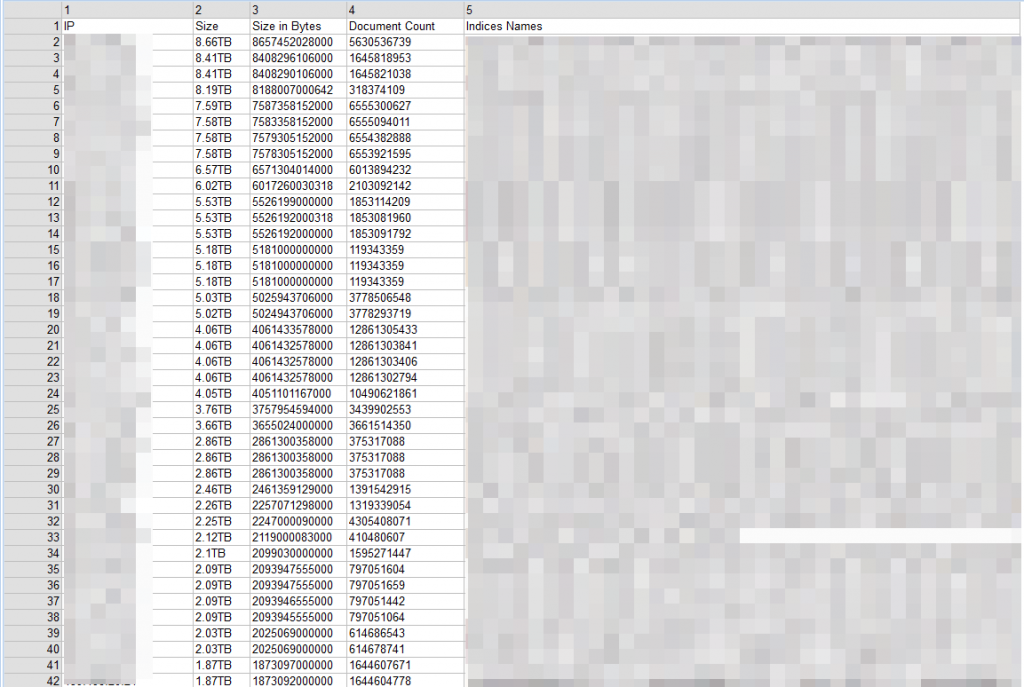

The final step, parsing the results, was the easiest. Out of ~330 thousand open servers, only 57,296 contained over 1MB of data.

Numbers

- Total Elasticsearch Servers Open To Public: 299,540

- Total Open Elasticsearch Servers With Data: 57,296

- Total Size Of Publicly Accessible Data: 462 TB

- Total Document Count Of Publicly Accessible Data: 621,362,063,812
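
Figures like these come from a simple aggregation over per-server statistics. The sketch below assumes each open server has already been reduced to a record holding its total store size in bytes and its document count. The `ServerStats` type and its field names are hypothetical. For a cluster you administer, Elasticsearch's `_stats` API reports comparable `docs.count` and `store.size_in_bytes` values. We also assume that "1MB" means 10^6 bytes and that the size and document totals cover every open server.

```python
from dataclasses import dataclass
from typing import Dict, Iterable

# Assumption: "1MB" means 10**6 bytes; use 1024 * 1024 if you prefer MiB.
ONE_MB = 1_000_000


@dataclass
class ServerStats:
    """Hypothetical per-server summary: total store size and document count."""
    ip: str
    size_bytes: int
    doc_count: int


def summarize(servers: Iterable[ServerStats], min_bytes: int = ONE_MB) -> Dict[str, int]:
    """Count open servers, count those holding more than min_bytes, and total everything."""
    servers = list(servers)
    return {
        "open_servers": len(servers),
        # Servers above the threshold are the ones treated as "containing data".
        "servers_with_data": sum(1 for s in servers if s.size_bytes > min_bytes),
        # Assumption: size and document totals are taken over every open server.
        "total_bytes": sum(s.size_bytes for s in servers),
        "total_docs": sum(s.doc_count for s in servers),
    }
```

Keeping the threshold as a parameter makes it easy to see how the "with data" count shifts if the 1MB cut-off is changed.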

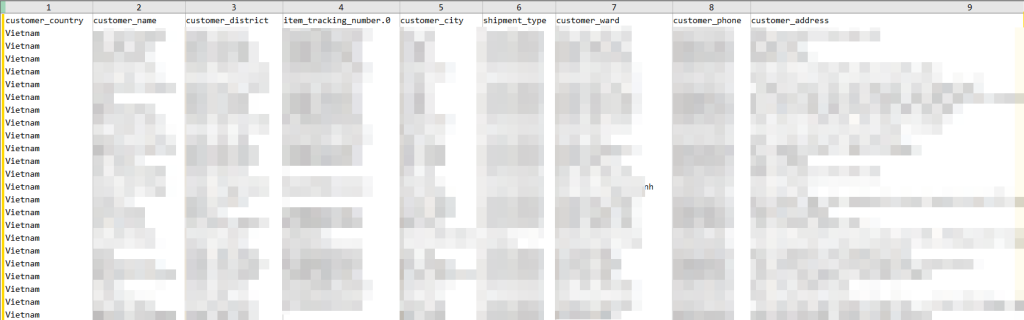

Don't fret too much: a lot of those documents are just logs. The problem is the ones that aren't. One example is this server we found containing data on 50 million customers of a popular online retailer (email, I.P., phone, name, address, order information):

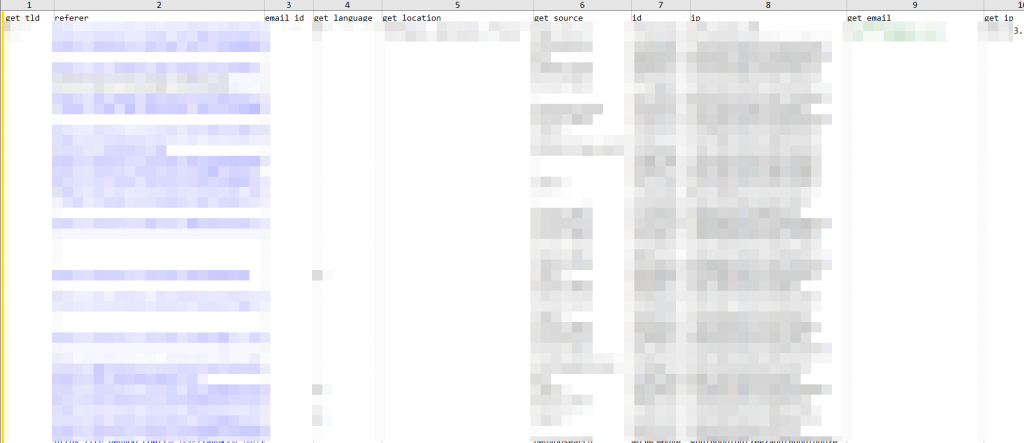

Another is this leads server exposing over 3B lines of emails, I.P.s, names, addresses, internet activity, interests, education, job history, income, and many more data points.

This is just the tip of the iceberg. We found servers with Facebook profile scrapes pushing past 3B records, including images, names, emails publicly visible on profiles, phone numbers, social media accounts, and much more.

What Now?

Our next step is to index all of these servers into a database so we can run further analytics. Our founding principles are based on reducing security risk for all users of the internet, not only our own. To stay consistent with those principles, we're currently working on notifying the owners of these servers about the possible security risks. We plan to do this with a script that automatically queries WHOIS records and emails each server's respective owner. Open databases like these shouldn't still be an issue in 2019, though. Hopefully, going into 2020, companies and data harvesters will take better care of their back ends. It's only a matter of time before someone with malicious intent stumbles upon this data.
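
The notification step mostly comes down to pulling a contact address out of a WHOIS response. Below is a minimal sketch, assuming the raw WHOIS text for a server's I.P. has already been fetched. It looks only at the `OrgAbuseEmail` field (used by ARIN) and the `abuse-mailbox` field (used by RIPE-style registries). That field list is an illustrative, non-exhaustive choice, the `abuse_contacts` name is hypothetical, and this is not our actual notification script.

```python
import re
from typing import List

# Illustrative, non-exhaustive set of WHOIS fields that carry an abuse contact
# (ARIN: OrgAbuseEmail; RIPE-style registries: abuse-mailbox). Compared lowercase.
ABUSE_FIELDS = ("orgabuseemail", "abuse-mailbox")

# Deliberately simple email pattern; good enough for WHOIS field values.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def abuse_contacts(whois_text: str) -> List[str]:
    """Return unique abuse contact emails from a raw WHOIS response, in order found."""
    seen = set()
    contacts = []
    for line in whois_text.splitlines():
        # WHOIS records are mostly "Key:   value" lines.
        key, sep, value = line.partition(":")
        if not sep or key.strip().lower() not in ABUSE_FIELDS:
            continue
        for email in EMAIL_RE.findall(value):
            # De-duplicate case-insensitively, keeping the first spelling seen.
            if email.lower() not in seen:
                seen.add(email.lower())
                contacts.append(email)
    return contacts
```

Restricting the search to abuse fields, instead of grabbing every address in the record, keeps notifications going to the people whose job is to handle them.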